Science is not a finished book. It’s more like a very long draft, constantly revised by people willing to challenge what came before. Most of us were taught certain frameworks in school as settled fact, delivered with the kind of confidence that makes questioning them feel unnecessary, even slightly impolite. Yet the history of science is full of moments where a widely held framework quietly, or not so quietly, started to crack.

That doesn’t mean science is unreliable. Quite the opposite. One of the very best things about science is that it is self-correcting: a scientist makes a set of observations, hypothesizes, and devises a theory to fit those observations. Other scientists then test it, and if it withstands scrutiny it becomes widely accepted. At any point, if contradicting evidence emerges, the original theory is revised or discarded. What follows is a look at some of the most widely taught theories that have turned out to be incomplete, overstated, or outright wrong in important ways.

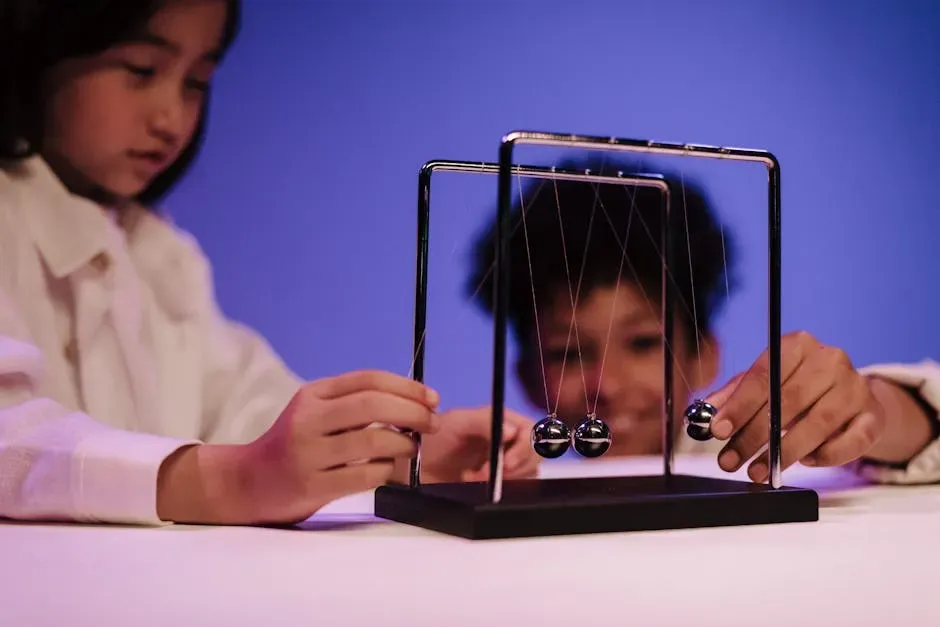

Newtonian Mechanics: Still Useful, but Not the Whole Story

Few frameworks have been more foundational to science education than Newton’s laws of motion and gravity. They work beautifully for everyday engineering and physics. Newtonian mechanics was later extended by the theory of relativity and by quantum mechanics. Relativistic corrections are negligibly small at velocities far below the speed of light, and quantum corrections are usually negligible at scales well above the atomic, so Newtonian mechanics remains entirely satisfactory under most circumstances.
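
To get a feel for how small the relativistic correction is at everyday speeds, here is a minimal Python sketch that evaluates the Lorentz factor, gamma = 1 / sqrt(1 - v²/c²), and reports how far it departs from 1. The function name and the example speeds are illustrative choices for this sketch, not figures from any particular source.

```python
import math

C = 299_792_458.0  # speed of light in m/s (exact by definition)


def gamma_minus_one(v: float) -> float:
    """Return gamma - 1, the fractional relativistic correction at speed v (m/s)."""
    beta_sq = (v / C) ** 2
    s = math.sqrt(1.0 - beta_sq)
    # Algebraically equal to 1/s - 1, but avoids round-off when v is far below c.
    return beta_sq / (s * (1.0 + s))


if __name__ == "__main__":
    # Illustrative speeds (approximate values chosen for this sketch).
    speeds = {
        "highway car (~30 m/s)": 30.0,
        "Earth's orbital speed (~29.8 km/s)": 29_780.0,
        "10% of light speed": 0.1 * C,
        "90% of light speed": 0.9 * C,
    }
    for label, v in speeds.items():
        print(f"{label:36s} gamma - 1 = {gamma_minus_one(v):.3e}")
```

For a car the correction is a few parts in a thousand trillion, which is why Newton’s equations are all an engineer needs. At 90 percent of light speed it is larger than 1, and the Newtonian picture no longer works.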

The trouble arises at the edges of reality. The anomalous perihelion precession of Mercury was the first observational evidence that relativity was a more accurate model than Newtonian gravity. Newton’s framework couldn’t explain it. Einstein’s could. That transition is now a textbook moment, yet many classrooms still present Newtonian physics as if it were a complete picture rather than a useful approximation with real limits.
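
The Mercury result can be checked with a few lines of arithmetic. The sketch below uses the standard general-relativistic expression for the extra perihelion advance per orbit, 6πGM / (c² a (1 − e²)). The orbital constants are approximate reference values supplied for this example, not numbers from the text above.

```python
import math

# Approximate reference values, assumed for this sketch.
GM_SUN = 1.32712440018e20    # Sun's gravitational parameter, m^3/s^2
C = 299_792_458.0            # speed of light, m/s
SEMI_MAJOR_AXIS = 5.7909e10  # Mercury's semi-major axis, m
ECCENTRICITY = 0.2056        # Mercury's orbital eccentricity
ORBITAL_PERIOD_DAYS = 87.969
DAYS_PER_CENTURY = 36_525.0


def precession_per_orbit(gm: float, a: float, e: float) -> float:
    """Relativistic perihelion advance per orbit, in radians."""
    return 6.0 * math.pi * gm / (C**2 * a * (1.0 - e**2))


def arcsec_per_century() -> float:
    """Accumulated relativistic advance for Mercury over 100 years, in arcseconds."""
    per_orbit = precession_per_orbit(GM_SUN, SEMI_MAJOR_AXIS, ECCENTRICITY)
    orbits = DAYS_PER_CENTURY / ORBITAL_PERIOD_DAYS
    return math.degrees(per_orbit * orbits) * 3600.0


if __name__ == "__main__":
    print(f"Relativistic advance: {arcsec_per_century():.1f} arcsec per century")
```

With these inputs the result is roughly 43 arcseconds per century, which matches the leftover precession that Newtonian gravity could not account for.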

The Standard Cosmological Model Under Pressure

Physics classrooms today still largely teach the Lambda-CDM model as the agreed framework for understanding the universe. In this standard model of cosmology, the universe is governed by general relativity, began with a Big Bang, and is today nearly flat, consisting of approximately five percent baryons, roughly a quarter cold dark matter, and the remainder dark energy. For a long time, this framework seemed robust.

More recently, however, that confidence has been shaken. Observational evidence seems to indicate significant tensions in Lambda-CDM, such as the Hubble tension, the KBC void, the dwarf galaxy problem, and ultra-large structures. In several cases, values of the same cosmological parameter estimated in different ways disagree, raising the question of whether the model is simply too simple. Research on extensions or modifications to Lambda-CDM, as well as fundamentally different models, is ongoing.
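
One common way to put a number on a tension such as the Hubble tension is to divide the gap between two estimates by their combined uncertainty. The sketch below does that under the simplifying assumption that the two measurements are independent with Gaussian errors. The input values are approximate published figures for the Hubble constant (an early-universe estimate near 67.4 ± 0.5 km/s/Mpc and a distance-ladder estimate near 73.04 ± 1.04 km/s/Mpc); they are supplied here for illustration and do not come from the text above.

```python
import math


def tension_sigma(value_a: float, err_a: float, value_b: float, err_b: float) -> float:
    """Gap between two estimates in units of their combined standard error.

    Assumes independent measurements with Gaussian uncertainties.
    """
    return abs(value_a - value_b) / math.hypot(err_a, err_b)


if __name__ == "__main__":
    # Approximate published values in km/s/Mpc, used as illustrative inputs.
    early_universe = (67.4, 0.5)
    distance_ladder = (73.04, 1.04)
    sigma = tension_sigma(*early_universe, *distance_ladder)
    print(f"Tension: {sigma:.1f} sigma")
```

With these inputs the gap comes out close to five standard deviations, far more than chance disagreement would normally produce. A real analysis would also have to handle correlated systematics, which this sketch ignores.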

Dark Energy: A Constant That Might Not Be Constant

One of the most significant assumptions baked into modern cosmology is that dark energy behaves as a fixed cosmological constant. Dark energy, which makes up approximately sixty-eight percent of the universe, is hypothesized to drive the accelerated expansion of the cosmos, as evidenced by Type Ia supernova observations and cosmic microwave background measurements. That story has been taught as settled for decades.

Recent survey data has raised doubts, with some analyses hinting that dark energy may weaken over time. If the universe’s expansion is indeed slowing, the consequences would be huge. Scientists might be forced to revisit the well-established standard model of cosmology. What’s more, the trajectory hints at a possible new ending for the universe: a catastrophic “Big Crunch,” in which expansion eventually reverses and the entire cosmos collapses. These are not fringe ideas. They emerged from major surveys involving hundreds of researchers across multiple countries.

The Ego Depletion Theory: Willpower as a Fuel Tank

For decades, psychology textbooks presented ego depletion as one of the field’s most reliable findings and a central mechanism of self-regulation: exerting willpower in one task was said to reliably impair subsequent self-control. The idea that willpower works like a muscle that gets tired resonated with almost everyone who had ever stayed up too late making bad decisions.

The theory did not hold up under rigorous testing. Large preregistered, multi-laboratory replications have failed to support this general effect, reporting effect sizes indistinguishable from zero under standardized protocols. Current consensus holds that the strong resource-depletion model is unsupported. In 2021, a preregistered replication study with over 3,500 participants, performed by the original authors of ego-depletion studies, also found a non-significant effect very close to zero. It’s a telling case of an elegant theory outlasting its evidence.

Social Priming: The Mind Tricked by Words

Social priming was for a time considered a cornerstone of behavioral psychology. The basic claim was striking: exposure to certain words or concepts could unconsciously steer how people behave, without them realizing it at all. John Bargh’s 1996 study on social priming claimed that participants exposed to words related to old age walked more slowly afterward. Subsequent replication attempts, including a notable failure in 2012, have been unable to replicate these findings consistently, suggesting the original effects might have been overstated or due to methodological issues.

Automatic responses were said to be just as powerful in shaping behavior as more cognitively complex and considered ones. Critics charged that the original experimenters had ignored a long tradition of research focused on demand characteristics, the motivations at work in both subjects and researchers in the setting of the psychological experiment. The broader lesson is that a theory can seem compelling and generate dozens of supportive studies, while still being fundamentally fragile.

The Replication Crisis and Psychology’s Reckoning

The ego depletion and social priming problems are not isolated incidents. They reflect a much wider pattern that became impossible to ignore over the past decade, as psychology underwent an extensive methodological reckoning commonly referred to as the replication crisis. Large-scale replication efforts, preregistered studies, and meta-scientific audits have demonstrated that many highly cited and routinely taught findings fail to reproduce under rigorous conditions, or reproduce only with substantially smaller effect sizes than originally reported.
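
One mechanism often proposed for those shrinking effect sizes is selective publication: when small studies only reach print if they cross a significance threshold, the published estimates overstate the true effect. The simulation below is a minimal sketch of that idea under simplified assumptions (two groups with known unit variance, a one-sided z-test, and a hypothetical true effect of 0.2 standard deviations). The function name and all parameter values are illustrative.

```python
import math
import random
import statistics


def simulate_published_effects(true_effect=0.2, n_per_group=20,
                               n_studies=5000, seed=0):
    """Simulate many small studies and 'publish' only the significant ones.

    Returns (mean of all estimates, mean of published estimates, publication rate).
    """
    rng = random.Random(seed)
    # Standard error of a difference in means with unit variance in each group.
    se = math.sqrt(2.0 / n_per_group)
    threshold = 1.96 * se  # estimate needed to count as significant
    all_estimates, published = [], []
    for _ in range(n_studies):
        treated = [rng.gauss(true_effect, 1.0) for _ in range(n_per_group)]
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        estimate = statistics.fmean(treated) - statistics.fmean(control)
        all_estimates.append(estimate)
        if estimate > threshold:  # only significant results get published
            published.append(estimate)
    return (statistics.fmean(all_estimates),
            statistics.fmean(published),
            len(published) / n_studies)


if __name__ == "__main__":
    overall, pub, rate = simulate_published_effects()
    print(f"true effect 0.20 | mean of all studies {overall:.2f} | "
          f"mean of published studies {pub:.2f} | published {rate:.0%}")
```

Because a study with 20 people per group needs an estimate above about 0.62 to reach significance, every published estimate in this toy setup is at least three times the true effect. A faithful replication with a larger sample would then be expected to find something much smaller.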

The Reproducibility Project, initiated by Brian Nosek and the Open Science Collaboration in 2011, attempted to replicate 100 published studies from three leading psychology journals. The results, published in 2015, were startling: only about a third of the replications yielded statistically significant results, compared to nearly all of the original studies. This stark discrepancy cast doubt on the reliability of many high-profile findings. The implications for what gets taught in classrooms are still being worked out.

Phlogiston and the Lesson of a Theory That Predicted Well but Was Wrong

History offers a particularly instructive example of how a theory can seem to work and still be completely mistaken. Phlogiston was once the dominant scientific explanation for combustion: it was described as the combustible part of a material, released whenever something burns or when metal rusts. That held up for a while, but not for long. Rusted metal was heavier than the starting metal, which didn’t make sense if phlogiston was being lost, and by the late 1700s Antoine Lavoisier had shown that oxygen, not phlogiston, was involved in combustion and rusting.

The phlogiston story matters because it illustrates something scientists face regularly: a common way to gather evidence is to make a prediction and see if it’s correct. The problem occurs when the prediction is right but the theory used to make it is wrong. Phlogiston made some accurate predictions. That was enough to keep it alive for a surprisingly long time before better evidence dismantled it entirely.

General Relativity’s Own Unresolved Tensions

Einstein’s theory of general relativity sits at the foundation of modern physics and has passed every experimental test thrown at it within our solar system. Yet at larger scales, and at the quantum level, it begins to show its limits. Several challenges underscore the urgent need to explore modified gravity theories. General relativity struggles to reconcile with quantum mechanics, fails to provide fundamental explanations for dark matter and dark energy, and faces limitations in describing extreme regimes such as black hole singularities and the very early universe.

Researchers are not suggesting general relativity is simply wrong. They’re pointing out that it’s incomplete in ways that matter enormously for our understanding of the cosmos. It is fair to say that cosmology currently lacks a beyond-ΛCDM theory of sufficient stature, and until one emerges, there will probably remain a degree of puzzled disagreement as to the meaning of observational anomalies. That’s an honest position, even if it’s an uncomfortable one to hold when writing textbooks.

Misleading Evidence and the Problem of Prediction Without Truth

One of the subtler dangers in science education is teaching students that a successful prediction validates a theory. It often does, but not always. There are surprisingly few proven facts in science. Scientists often talk about how much evidence there is for their theories, and the more evidence, the stronger and more accepted a theory becomes. Scientists are usually very careful to accumulate lots of evidence and test theories thoroughly. Yet the history of science has some key examples of evidence misleading enough to bring a whole scientific community to believe something later considered to be radically false.

The pattern repeats across disciplines. A theory produces a striking result. Others confirm it. The idea enters textbooks. Then, years or decades later, better methods or a contradicting dataset appears, and the revision begins. The core feature of science is that it can self-correct. Any mature science has a history of older theories, findings, or measures that were replaced with better ones. Unfortunately, psychology in particular has shown little clear evidence of this kind of progress: it lacks a graveyard of discarded concepts that failed to find empirical support. That’s a problem worth taking seriously, particularly in fields that shape how people understand themselves.

What This Means for How We Teach Science

The deeper question is not whether any specific theory is right or wrong. It’s whether the way we teach science gives people the tools to understand that knowledge is provisional. Ego depletion and social priming are only two of the many misconceptions that some people still hold about psychology, some of which relate to theories that were debunked decades ago. As in any other science, progress in psychology is made through a process of theories being formulated, tested, and then either affirmed or superseded, but what happens among scientists can take a long time to filter through to the general population.

The replication crisis does not imply that psychology is fundamentally unscientific, nor that most findings are false. Rather, it refers to a documented pattern in which reported effects frequently fail to reappear in direct replications, replicated effects are substantially smaller than originally published estimates, and statistical significance is sometimes achieved through analytic flexibility rather than a stable signal. Learning to hold that kind of uncertainty without panic is, arguably, what genuine scientific literacy looks like.
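
The point about analytic flexibility can be made concrete with a small simulation. In the sketch below there is no real effect at all, yet a researcher who measures several outcomes and reports whichever one crosses p < 0.05 will find "significance" far more often than the nominal five percent. The setup is a simplified assumption (independent outcomes, known unit variance, a two-sided z-test), and the function name and parameters are illustrative.

```python
import math
import random
import statistics


def false_positive_rate(n_outcomes, n_per_group=20, n_experiments=4000, seed=1):
    """Share of null experiments where at least one outcome looks significant."""
    rng = random.Random(seed)
    # Standard error of a difference in means with unit variance in each group.
    se = math.sqrt(2.0 / n_per_group)
    hits = 0
    for _ in range(n_experiments):
        for _ in range(n_outcomes):
            # Both groups come from the same distribution: no true effect.
            group_a = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
            group_b = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
            z = (statistics.fmean(group_a) - statistics.fmean(group_b)) / se
            if abs(z) > 1.96:  # two-sided test at the 0.05 level
                hits += 1
                break  # stop at the first "significant" outcome
    return hits / n_experiments


if __name__ == "__main__":
    for k in (1, 5):
        expected = 1.0 - 0.95 ** k
        print(f"{k} outcome(s): simulated {false_positive_rate(k):.3f}, "
              f"expected about {expected:.3f}")
```

With one outcome the false positive rate stays near 5 percent. With five independent outcomes it rises to roughly 1 − 0.95⁵, or about 23 percent, without any fraud or error in the individual tests.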